Robotics

Autonomous Bio-mimetic Swarm: Integrated Perception & Edge Control

(Ph.D Minor, Harvard University)

Designed and implemented an integrated perception-to-action module for miniature, bio-mimetic underwater robots. To overcome the lack of real-world datasets, I used generative AI to produce high-fidelity synthetic underwater environments for model training. The resulting TensorFlow Lite computer vision model was heavily optimized for the Google Coral Edge TPU, enabling real-time classification of obstacles, peers, and adversarial targets. This perception layer directly informs the robot’s trajectory planning, enabling autonomous enemy evasion and decentralized collision avoidance in high-noise fluid environments.

C++/Python

TensorFlow Lite

Post-Training Quantization

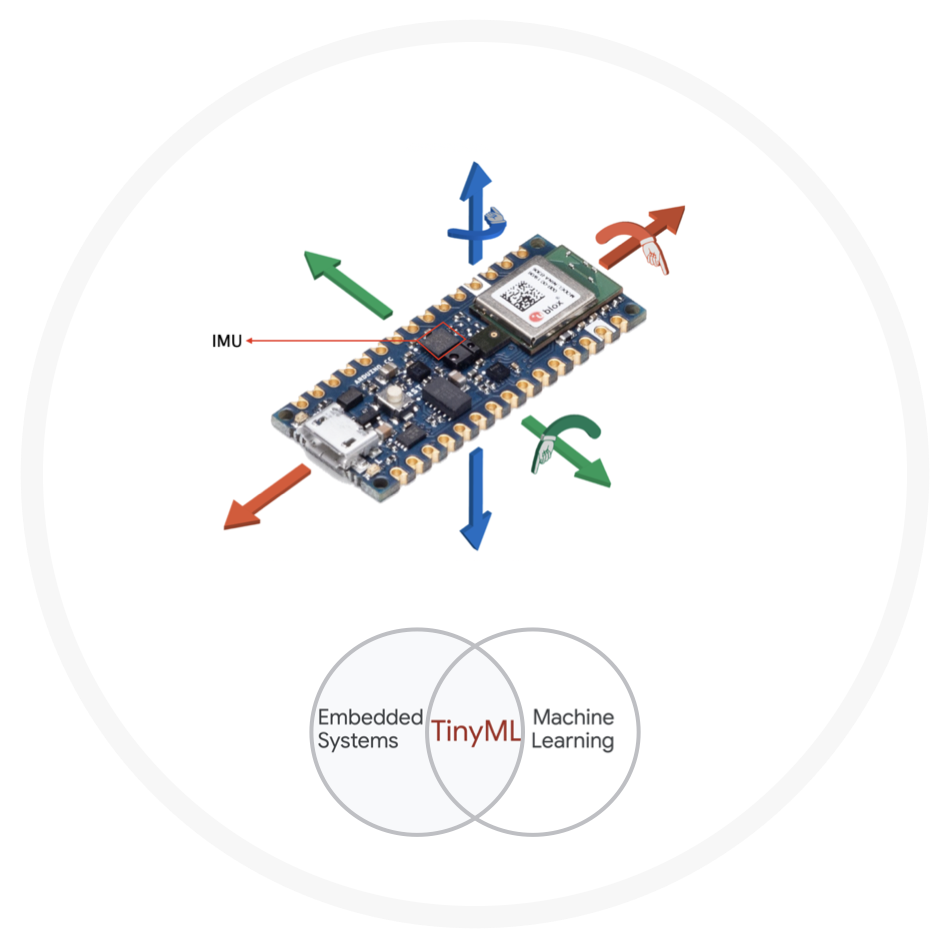

Sensor Fusion (IMU/Pressure)

Google Coral

Generative AI

Robotics

Autonomous Navigation & Real-Time Collision Avoidance

(Internship, Disney Research)

Implemented collision-free autonomous driving trajectories on physical miniature vehicle platforms. I engineered a robust control layer that translates high-level global path planning into precise steering and acceleration inputs. By bridging the gap between trajectory generation and low-level hardware actuation, I ensured the vehicles could navigate complex environments and execute real-time reactive maneuvers to maintain a safe distance and avoid obstacles.

PID Control

Arduino

Kinematics

Kalman Filter

Edge AI

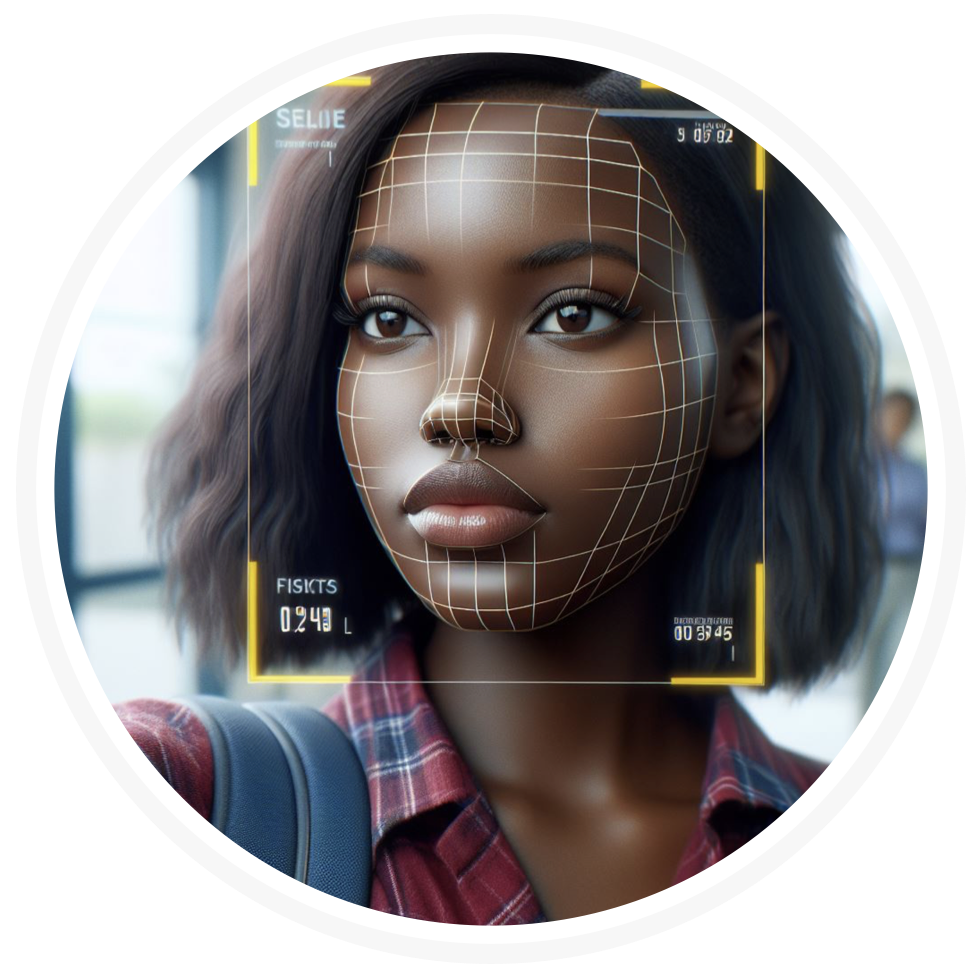

Hardware-Aware AI: High-Efficiency Clinical Screening at the Edge

(Lead Engineer, UN WFP)

Architected and deployed a mobile Computer Vision (CV) system for real-time childhood malnutrition screening in off-grid, low-resource environments. To enable clinical-grade inference on mobile devices and Google Coral Edge TPUs, I implemented a rigorous optimization pipeline including Full Integer (INT8) Quantization and Structured Pruning. This reduced model latency and memory footprint by over 10x without sacrificing diagnostic accuracy, successfully bridging the gap between high-compute deep learning and field-ready hardware.

TensorFlow Lite

INT8 Quantization

Edge TPU

Python

Computer Vision

Scalable 3D Reconstruction & Volumetric Intelligence

(Ph.D Major, Harvard University)

Developed a high-performance software ecosystem for the automated reconstruction of neural circuits from massive 3D Electron Microscopy datasets. My research pioneered the optimization of 3D Convolutional Neural Networks (CNNs) for volumetric processing and the design of Recurrent Neural Networks (RNNs) to maintain spatial consistency across biological structures. Beyond segmentation, I engineered a graph-based analytics framework that automatically detects topological errors and facilitates rapid proofreading of neural sub-graphs, drastically reducing the manual labor required for large-scale connectomics.

PyTorch

3D CNNs

RNNs

Volumetric Data

Quantitative

Predictive Intelligence & Semantic Search for Capital Markets

(AI Consultant, CapConnect+)

Architected a high-performance data engine for the commercial paper market to analyze and forecast investment patterns across a decade of financial data. I integrated Vector Search via Google Vertex AI to enable semantic retrieval, allowing users to identify complex historical risk profiles and market correlations that keyword-based systems miss. By combining Time-Series Forecasting with a modern data stack, I transformed raw transactional records into a strategic analytics platform capable of discerning market shifts and enterprise-level investment trends.

TensorFlow

Vertex AI

Time-Series

Vector Search

Prophet

Quantitative

Immersive Autonomy: Quantifying Human Trust in Self-Driving Systems

(Research Fellow, Harvard Business School)

Designed and engineered a high-fidelity VR simulation platform for Harvard Business School to quantify human trust during autonomous vehicle (AV) transitions. Using Oculus VR (Meta Quest), I developed complex self-driving scenarios in which users interacted with various levels of vehicle autonomy. The system was designed to measure "over-trust" and "under-trust" behaviors in real time, providing empirical data on how users react to automated edge cases, system failures, and collision-avoidance maneuvers in a fully immersive, zero-risk environment.

HCI

Oculus VR

Data Analysis

Unreal Engine 5

Statistical Modeling